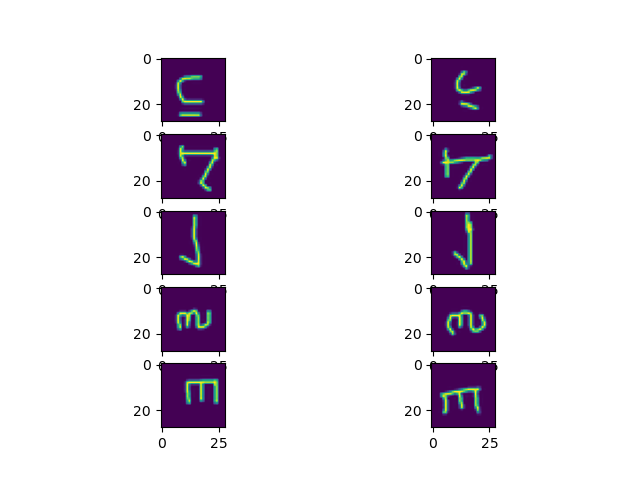

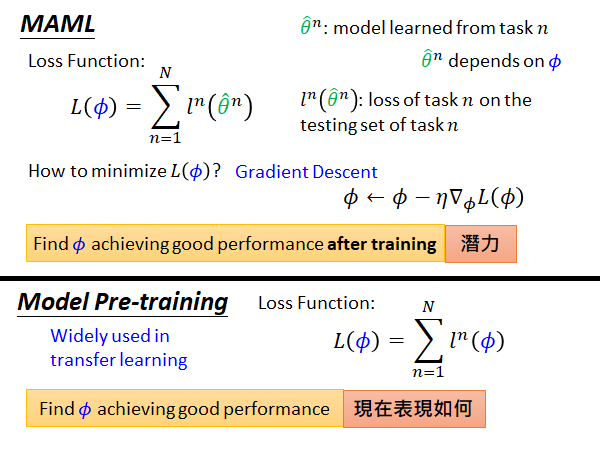

In part I of this tutorial we argued that few-shot learning can be made tractable by incorporating prior knowledge, and that this prior knowledge can be divided into three groups:

- Prior knowledge about class similarity: We learn embeddings from training tasks that allow us to easily separate unseen classes with few examples.
- Prior knowledge about learning: We use prior knowledge to constrain the learning algorithm to choose parameters that generalize well from few examples.
- Prior knowledge of data: We exploit prior knowledge about the structure and variability of the data, and this allows us to learn viable models from few examples.

We also discussed the family of methods that exploit prior knowledge of class similarity. In part II we will discuss the remaining two families, which exploit prior knowledge about learning and prior knowledge about the data, respectively.

Perhaps the most obvious approach to few-shot learning would be transfer learning: we first find a similar task for which there is plentiful data and train a network for this. Then we adapt this network for the few-shot task. We might either (i) fine-tune this network using the few-shot data, or (ii) use the hidden layers as input for a new classifier trained with the few-shot data. Unfortunately, when training data is really sparse, the resulting classifier typically fails to generalize well.

In this section we’ll discuss three related methods that are superior for the few-shot scenario. In the first approach (“learning to initialize”), we explicitly learn networks with parameters that can be fine-tuned with a few examples and still generalize well. MAML and Reptile are two algorithms of this kind.

Our analysis suggests that Reptile and MAML perform a very similar update, including the same two terms with different weights. In our experiments, we show that Reptile and MAML yield similar performance on the Omniglot and Mini-ImageNet benchmarks for few-shot classification. Reptile also converges to the solution faster, since the update has lower variance.

Our analysis of Reptile suggests a plethora of different algorithms that we can obtain using different combinations of the SGD gradients. Assume that we perform $k$ steps of SGD on each task using different minibatches, yielding gradients $g_1, g_2, \dots, g_k$. The figure shows the learning curves on Omniglot obtained by using each sum as the meta-gradient. $g_2$ corresponds to first-order MAML, an algorithm proposed in the original MAML paper. Including more gradients yields faster learning, due to variance reduction. Note that simply using $g_1$ (which corresponds to $k=1$) yields no progress, as predicted for this task, since zero-shot performance cannot be improved.
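The idea of building a meta-gradient from different combinations of the per-step SGD gradients can be sketched in a few lines of NumPy. This is a minimal illustration, not the code behind the Omniglot curves: the function names `partial_sum_meta_gradients` and `last_gradient_only` are hypothetical, the example gradients are made up, and reading "each sum" as the running partial sums $g_1,\ g_1 + g_2,\ \dots$ is an assumption.

```python
import numpy as np


def partial_sum_meta_gradients(grads):
    """Return the running sums [g_1, g_1 + g_2, ..., g_1 + ... + g_k].

    Each entry is one candidate meta-gradient (assumed reading of "each sum").
    """
    stacked = np.stack(grads)            # shape (k, n_params)
    return list(np.cumsum(stacked, axis=0))


def last_gradient_only(grads):
    """Use only the final inner-loop gradient.

    For k = 2 this is g_2, which the text identifies with first-order MAML.
    """
    return grads[-1]


# Made-up gradients from k = 3 inner SGD steps on one task.
grads = [
    np.array([1.0, 0.0]),
    np.array([0.5, -0.5]),
    np.array([0.25, 0.25]),
]

candidates = partial_sum_meta_gradients(grads)
for i, candidate in enumerate(candidates, start=1):
    print(f"sum of first {i} gradient(s):", candidate)
print("last gradient only:", last_gradient_only(grads))
```

With plain SGD in the inner loop, the full sum $g_1 + \dots + g_k$ is proportional to the difference between the initial and adapted weights, which is why it plays the role of the Reptile meta-gradient.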

If $k=1$ (a single SGD step per task), Reptile would correspond to “joint training”, that is, performing SGD on the mixture of all tasks. While joint training can learn a useful initialization in some cases, it learns very little when zero-shot learning is not possible (e.g. when the output labels are randomly permuted). Reptile requires $k>1$, where the update depends on the higher-order derivatives of the loss function; as we show in the paper, this behaves very differently from $k=1$ (joint training).

To analyze why Reptile works, we approximate the update using a Taylor series. We show that the Reptile update maximizes the inner product between gradients of different minibatches from the same task, corresponding to improved generalization. This finding may have implications outside of the meta-learning setting for explaining the generalization properties of SGD.
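The Taylor-series argument can be sketched for $k=2$. The notation here is an assumption introduced for illustration and does not come from this text: $\alpha$ is the inner SGD step size, and $\bar g_i$ and $\bar H_i$ are the gradient and Hessian of the loss on minibatch $i$, both evaluated at the initial parameters $\phi$. Expanding the second gradient around $\phi$ gives

$$
\begin{aligned}
g_1 &= \bar g_1, \\
g_2 &= \bar g_2 - \alpha \bar H_2 \bar g_1 + O(\alpha^2).
\end{aligned}
$$

Taking expectations over minibatches, two terms appear: $\text{AvgGrad} = \mathbb{E}[\bar g_1]$ and $\text{AvgGradInner} = \mathbb{E}[\bar H_2 \bar g_1] = \tfrac{1}{2}\,\mathbb{E}\!\left[\tfrac{\partial}{\partial \phi}(\bar g_1 \cdot \bar g_2)\right]$. Under these assumptions the expected meta-gradients are

$$
\begin{aligned}
\mathbb{E}[g_{\text{MAML}}] &= \text{AvgGrad} - 2\alpha\,\text{AvgGradInner} + O(\alpha^2), \\
\mathbb{E}[g_{\text{FOMAML}}] &= \mathbb{E}[g_2] = \text{AvgGrad} - \alpha\,\text{AvgGradInner} + O(\alpha^2), \\
\mathbb{E}[g_{\text{Reptile}}] &= \mathbb{E}[g_1 + g_2] = 2\,\text{AvgGrad} - \alpha\,\text{AvgGradInner} + O(\alpha^2).
\end{aligned}
$$

Stepping against any of these meta-gradients moves $\phi$ along $+\text{AvgGradInner}$, which increases the inner product $\bar g_1 \cdot \bar g_2$ between gradients of different minibatches. For $k=1$ only $g_1$ is available, so the AvgGradInner term is absent.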

As an alternative to the last step (the update that moves the initialization $\Phi$ toward the task-adapted weights $W$), we can treat $\Phi - W$ as a gradient and plug it into a more sophisticated optimizer like Adam.

It is at first surprising that this method works at all.
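The role of $\Phi - W$ as a gradient can be made concrete with a small NumPy sketch of a Reptile-style loop. Everything here is an assumption chosen for illustration: the toy task family (1-D linear regression with random slope and intercept), the helper names `mse_grad`, `inner_sgd`, `reptile_update` and `sample_task`, and all hyperparameters. The outer step uses plain gradient descent on $\Phi - W$; an optimizer such as Adam would consume the same quantity instead.

```python
import numpy as np


def mse_grad(w, x, y):
    """Gradient of mean squared error for the model y_hat = w[0] * x + w[1]."""
    err = w[0] * x + w[1] - y
    return np.array([np.mean(2 * err * x), np.mean(2 * err)])


def inner_sgd(phi, x, y, k, lr, batch_size, rng):
    """Run k SGD steps from phi on one task, each on a fresh minibatch.

    Returns the adapted weights W and the list of per-step gradients.
    """
    w = phi.copy()
    grads = []
    for _ in range(k):
        idx = rng.choice(len(x), size=batch_size, replace=False)
        g = mse_grad(w, x[idx], y[idx])
        grads.append(g)
        w = w - lr * g
    return w, grads


def reptile_update(phi, w, epsilon):
    """Treat (phi - w) as a gradient and take one descent step of size epsilon.

    Equivalent to interpolating: phi + epsilon * (w - phi).
    """
    meta_grad = phi - w
    return phi - epsilon * meta_grad


def sample_task(rng, n=20):
    """Hypothetical task family: y = a * x + b with task-specific a and b."""
    a, b = rng.uniform(0.5, 1.5), rng.uniform(-1.0, 1.0)
    x = rng.uniform(-1.0, 1.0, size=n)
    return x, a * x + b


rng = np.random.default_rng(0)
phi = np.zeros(2)
for _ in range(200):
    x, y = sample_task(rng)
    w, _ = inner_sgd(phi, x, y, k=5, lr=0.1, batch_size=10, rng=rng)
    phi = reptile_update(phi, w, epsilon=0.1)

print("learned initialization:", phi)
```

Because the inner loop is plain SGD, $\Phi - W$ equals the inner learning rate times the sum of the per-step gradients, so the outer step really is a gradient-style update built from $g_1, \dots, g_k$.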
